Zero Trust

Zero Trust is a conceptual security framework built on the idea that no actor is trusted automatically, regardless of location or network environment. It is designed to protect against both internal and external threats, including those already inside the network perimeter. It calls for continuous verification of actor identity and strict enforcement of access controls and policies.

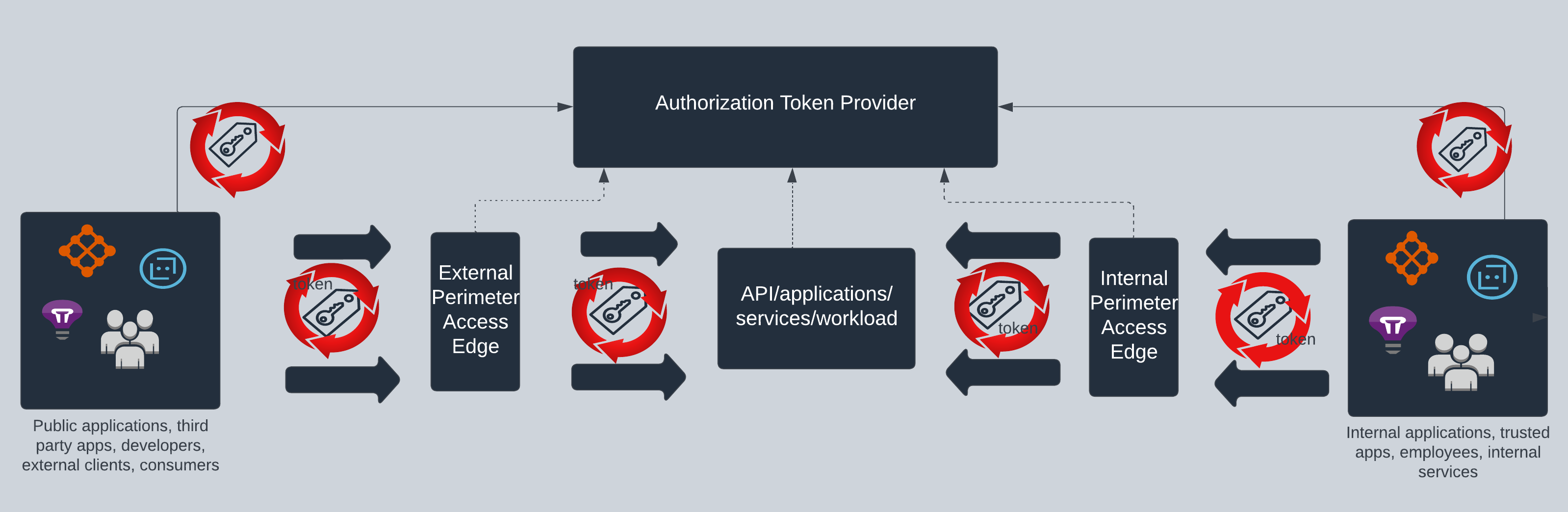

Applications are a primary target for breaches in any organization. Network (perimeter) security is only one facet of the Zero Trust paradigm; applications need continuous protection as well. Token-based architecture is prevalent for API and application access, and in this article we look at how to weave Zero Trust principles into such architectures to reduce the attack surface and enhance security. Many organizations have kept their Zero Trust efforts at or near the starting line, and it’s time to carry them deep into application architecture.

Zero Trust in Token Based Architecture

How the Zero Trust architecture pattern is understood and applied varies widely with the systems that use it. Our focus here is the token, which represents an authorization grant used to access resources, and the capabilities of the token issuer (provider). Tokens are used heavily in authentication and authorization to obtain access to specific resources, services, applications, APIs, and workloads. You may be familiar with protocols and mechanisms that each produce their own flavor of token, such as OAuth, OIDC, SAML, API keys, JWT, WS-Fed, proprietary tokens, and SSO tokens, all flowing across the applications, services, and workloads in your infrastructure. Standardizing and unifying the token landscape on a modern, well-adopted approach is critical to visibility, enforcement, and flow control within application architectures.

In a token-based architecture, the attack surface is the token itself and the way it is created, verified, and used across applications. In large organizations it is common for a token to be obtained once and then used for its whole lifetime across applications of differing sensitivity and criticality: once you are in the system, you access every application with the same token. This makes the attack surface of a vulnerable token very large, and such practices should be mitigated. Let’s look at the key aspects of applying Zero Trust principles in a token-based architecture.

Identity Verification

Every user or entity must be authenticated before receiving a token. For users, this is traditionally done with methods such as passwords, multi-factor authentication, or biometrics; once the user’s identity is verified, a token is issued. From an application ecosystem perspective, identity does not belong only to users: a device, workload, service, or application may also have to identify itself to obtain a token for accessing other resources. This does not mean you need an in-house identity provider. The perimeter of user identity has expanded, and the identity may come from another organization or a social provider that your organization must trust before issuing a token. The token provider must therefore be flexible enough to consume its own identities as well as identities from other sources, establish trust, and run additional checks before issuing an actor token.
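As a rough illustration of trusting external identity sources, the sketch below decides whether an identity assertion qualifies for a token. It assumes the assertion's signature has already been checked elsewhere; the registry `TRUSTED_ISSUERS`, the issuer URLs, and the function `may_issue_token` are hypothetical names chosen for this example. The `iss`, `sub`, and `amr` fields follow common OIDC claim names.

```python
# Hypothetical trust registry: issuer URL -> extra checks required
# before a token may be issued for identities from that issuer.
TRUSTED_ISSUERS = {
    "https://idp.internal.example": {"require_mfa": False},
    "https://partner-idp.example": {"require_mfa": True},
}


def may_issue_token(assertion: dict) -> bool:
    """Decide whether an already signature-checked assertion qualifies for a token."""
    rules = TRUSTED_ISSUERS.get(assertion.get("iss"))
    if rules is None:
        return False  # unknown issuer: no trust has been established
    if not assertion.get("sub"):
        return False  # no subject to bind the token to
    if rules["require_mfa"] and "mfa" not in assertion.get("amr", []):
        return False  # this issuer's identities need multi-factor authentication
    return True
```

Here an identity from the partner issuer is accepted only when its `amr` list contains `"mfa"`, while an assertion from an unlisted issuer is always rejected.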

Token Integrity

Once an actor is identified and verified, it is essential to produce a signed, tamper-proof token for the subsystems that rely on it to serve their resources. The token provider must act as the authoritative issuer, and it should support performance-efficient mechanisms to verify the authenticity, integrity, and validity of issued tokens. The provider should also be able to revoke signing keys on demand as well as on a schedule. Producing a signed token is not enough on its own: the system should support symmetric and asymmetric algorithms, and be able to limit, revoke, and publish the list of keys used to verify signatures.

Continuous Verification

Traditional authentication, such as a password check, is often a one-time event. In a Zero Trust token-based architecture, continuous verification must be used to re-validate the token and confirm that the user’s access is still valid. Inputs can include time-based expiration, device trust scores, or user behavior analysis. There should also be simple mechanisms to intercept failures and give users a way to re-verify when required. Cached tokens may be susceptible to attack if they are not re-verified, so frequently checking token validity with the provider is recommended to ensure the token is still honored. This in turn means the provider needs highly performant mechanisms to support token validation at scale.
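The trade-off between caching and re-verification can be shown with a small sketch. `TokenProvider` is a hypothetical in-memory stand-in for a provider's validation endpoint, and `ResourceGuard` is an assumed name for the component that sits in front of a resource. The current time is passed in explicitly so the behavior is easy to follow; a real caller would pass `time.time()`.

```python
class TokenProvider:
    """Hypothetical in-memory stand-in for a provider's validation endpoint."""

    def __init__(self):
        self._tokens = {}  # token id -> expiry as a Unix timestamp

    def issue(self, token_id: str, ttl_seconds: float, now: float) -> None:
        self._tokens[token_id] = now + ttl_seconds

    def revoke(self, token_id: str) -> None:
        self._tokens.pop(token_id, None)

    def is_active(self, token_id: str, now: float) -> bool:
        expiry = self._tokens.get(token_id)
        return expiry is not None and now < expiry


class ResourceGuard:
    """Re-validates a token with the provider once the cached answer is too old."""

    def __init__(self, provider: TokenProvider, max_cache_age: float):
        self._provider = provider
        self._max_cache_age = max_cache_age
        self._cache = {}  # token id -> (is_active, checked_at)

    def allow(self, token_id: str, now: float) -> bool:
        cached = self._cache.get(token_id)
        if cached is not None and now - cached[1] < self._max_cache_age:
            return cached[0]  # recent enough: reuse the last answer
        active = self._provider.is_active(token_id, now)
        self._cache[token_id] = (active, now)
        return active
```

With `max_cache_age=10`, a revoked token can still be accepted for up to ten seconds after the last check. Shrinking that window tightens security but increases validation traffic, which is why provider validation performance matters.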

Dynamic Authorization

Zero Trust requires ongoing verification that the actor’s token authorizes access to the specific resource, which can be achieved with fine-grained access control and policies. Enforcement is traditionally done at the resource level: tokens normally carry information about the user’s permissions or roles, which is evaluated in real time to decide whether to grant access. More modern approaches shift this authorization further left, to issuance time, so that more fine-grained tokens are issued based on the actor, the resource, and the context in which authorization is requested.
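A policy decision that takes the actor, the resource, and the request context into account might look like the following sketch. The policy table `POLICIES`, the context keys `mfa_completed` and `risk_score`, and the thresholds are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AuthorizationRequest:
    actor_roles: frozenset
    resource: str
    action: str
    context: dict = field(default_factory=dict)


# Hypothetical policy table: (resource, action) -> requirements.
POLICIES = {
    ("payments", "read"): {"role": "teller", "mfa": False, "max_risk": 70},
    ("payments", "write"): {"role": "teller", "mfa": True, "max_risk": 30},
}


def authorize(request: AuthorizationRequest) -> bool:
    """Deny by default; allow only when every requirement of the matching policy holds."""
    policy = POLICIES.get((request.resource, request.action))
    if policy is None:
        return False
    if policy["role"] not in request.actor_roles:
        return False
    if policy["mfa"] and not request.context.get("mfa_completed", False):
        return False
    # A missing risk score is treated as maximum risk.
    return request.context.get("risk_score", 100) <= policy["max_risk"]
```

The same actor can be allowed to read and denied to write within a single session, because the decision depends on the context supplied with each request rather than on a one-time login.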

Layered Defense-In-Depth

To protect a resource, it should be possible to cut off token-based access at several layers. There should be capabilities to authorize the request for a token itself, then the token’s use at the edge perimeter of the resource, and then again next to the workload or application. This layered defense ensures a much tighter traffic flow and reduces the risk of unauthorized access. These mechanisms should be as flexible as possible, for example configurable policy-based rules rather than static, limited rulesets.
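One way to picture the request-time layers is as an ordered list of checks, where the first denial stops the request. The sketch below covers only the edge and workload layers and assumes the issuance check already happened at the provider. The audience `orders-api`, the scope names, and the function names are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# A check returns a denial reason, or None to let the request pass.
Check = Callable[[dict], Optional[str]]


def edge_check(request: dict) -> Optional[str]:
    """Edge layer: the token must be addressed to this API at all."""
    if "orders-api" not in request["token"].get("aud", []):
        return "edge: token audience does not include orders-api"
    return None


def workload_check(request: dict) -> Optional[str]:
    """Workload layer: the token must carry the scope for this operation."""
    required = "orders:" + ("read" if request["method"] == "GET" else "write")
    if required not in request["token"].get("scope", []):
        return f"workload: missing scope {required}"
    return None


def evaluate_layers(request: dict, layers: List[Check]) -> Tuple[bool, str]:
    """Run the layers in order and stop at the first denial."""
    for layer in layers:
        reason = layer(request)
        if reason is not None:
            return False, reason
    return True, "allowed"
```

Because the layers are plain functions in a list, rules can be added or reordered through configuration instead of being fixed in code.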

Least Privilege

We should also apply the principle of least privilege to tokens. Each token should contain only the information and permissions required for the specific task or resource access, and nothing more. This means tailoring the contents of the token to parameters such as the actor, the target resource, and contextual data. Based on those contents, policies at resource access points can then grant only the minimum access that the token supports.
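Tailoring token contents to a single target resource can be sketched as a set intersection. The catalog `RESOURCE_SCOPES`, the scope names, and the `expires_in` field are hypothetical; the point is only that the minted claims never exceed what was granted, requested, and understood by the target.

```python
# Hypothetical catalog of the scopes each resource understands.
RESOURCE_SCOPES = {
    "orders-api": {"orders:read", "orders:write"},
    "reports-api": {"reports:read"},
}


def mint_claims(actor: str, granted: set, requested: set, resource: str,
                ttl_seconds: int = 300) -> dict:
    """Build claims limited to one resource and to scopes both granted and requested."""
    scopes = granted & requested & RESOURCE_SCOPES.get(resource, set())
    if not scopes:
        raise PermissionError(f"{actor} has no usable scopes for {resource}")
    return {
        "sub": actor,
        "aud": resource,          # usable at this resource only
        "scope": sorted(scopes),  # nothing beyond the intersection
        "expires_in": ttl_seconds,
    }
```

An actor who holds `orders:write` but asks only for `orders:read` receives a read-only token, so a leaked token exposes less than the actor's full set of permissions.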

Micro Segmentation

Under this principle, the network and its resources are normally divided into smaller segments or compartments, and the same idea can be extended to applications and services. Each segment should have strictly defined resources, established access paths, and a set of policies, constraints, and access controls that limit the audience, so that only a limited set of authorized tokens can interact with the resources in that segment.
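Applied to tokens, segmentation can be approximated by restricting which token audiences each segment accepts. The segment map `SEGMENTS`, its paths, and its audience names are hypothetical values for this sketch.

```python
# Hypothetical segment map: which resources live in each segment and
# which token audiences are allowed to reach it.
SEGMENTS = {
    "payments": {"resources": {"/charges", "/refunds"}, "audiences": {"payments-segment"}},
    "catalog": {"resources": {"/products"}, "audiences": {"catalog-segment", "storefront"}},
}


def segment_for(path: str):
    """Return the name of the segment that owns the path, or None."""
    for name, segment in SEGMENTS.items():
        if path in segment["resources"]:
            return name
    return None


def may_enter(token_claims: dict, path: str) -> bool:
    """Allow only tokens whose audience is accepted by the owning segment."""
    name = segment_for(path)
    if name is None:
        return False  # resources outside every segment are unreachable
    audience = token_claims.get("aud", [])
    if isinstance(audience, str):
        audience = [audience]
    return bool(SEGMENTS[name]["audiences"].intersection(audience))
```

A token minted for the storefront can read `/products` but cannot reach `/charges`, even though both sit behind the same infrastructure.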

Encryption and Secure Communication

Ensure that actor tokens are transmitted securely and that only authorized parties obtain them for further use. Any sensitive information contained in a token must be encrypted so that only the intended recipients of that information can decrypt it. Providers must also support mechanisms to limit token issuance and bind tokens to specific applications, using techniques such as mTLS and DPoP.
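Binding a token to a client can be illustrated with the certificate thumbprint approach used for mutual-TLS certificate-bound access tokens (RFC 8705), where the token carries a `cnf` claim whose `x5t#S256` member is the base64url-encoded SHA-256 hash of the client's DER-encoded certificate. The function names below are assumptions for this sketch, and it takes the certificate bytes as input rather than performing a TLS handshake.

```python
import base64
import hashlib
import hmac


def certificate_thumbprint(cert_der: bytes) -> str:
    """base64url(SHA-256(DER certificate)) without padding."""
    digest = hashlib.sha256(cert_der).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def bind_to_certificate(claims: dict, cert_der: bytes) -> dict:
    """Return a copy of the claims bound to the given client certificate."""
    bound = dict(claims)
    bound["cnf"] = {"x5t#S256": certificate_thumbprint(cert_der)}
    return bound


def presented_by_owner(claims: dict, presented_cert_der: bytes) -> bool:
    """Check that the certificate on the connection matches the token's binding."""
    expected = claims.get("cnf", {}).get("x5t#S256")
    if not isinstance(expected, str):
        return False  # unbound tokens are rejected in this sketch
    actual = certificate_thumbprint(presented_cert_der)
    return hmac.compare_digest(expected.encode("utf-8"), actual.encode("utf-8"))
```

A stolen token is then of little use on its own, because the resource also requires the caller to prove possession of the matching certificate's private key during the TLS handshake.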

Centralized Authorization Provider

It is recommended that the token provider that issues and validates tokens be centralized. Such a provider can act as a central authority, provide visibility and governance, and enforce access control policies consistently across the organization. Alternatively, an organization may be subdivided into lines of business (LOBs) or suborganizations, each with an independent token provider, with trust established across these providers in a standard way.

Monitoring and Analytics

Continuous monitoring and analysis of token-based activity is crucial in a Zero Trust architecture. Token providers should have mechanisms to communicate detected patterns or events to other subsystems through integration points, so that potential threats can be mitigated. They should also be able to stop minting new tokens based on their own data or on external risk feeds. Corrective actions may include notifications to revoke and remove tokens, which allow other systems to build a token block list.
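A subsystem that consumes such notifications could maintain its block list roughly as follows. The event shape (`type`, `jti`, `exp`), the class `TokenBlockList`, and the risk threshold in `may_mint` are hypothetical and not taken from any particular product.

```python
class TokenBlockList:
    """Hypothetical subscriber that turns revocation events into a local block list."""

    def __init__(self):
        self._blocked = {}  # token id (jti) -> token expiry as a Unix timestamp

    def on_event(self, event: dict) -> None:
        if event.get("type") == "token_revoked":
            self._blocked[event["jti"]] = event["exp"]

    def is_blocked(self, jti: str, now: float) -> bool:
        self._purge(now)
        return jti in self._blocked

    def _purge(self, now: float) -> None:
        # Expired tokens are rejected anyway, so their entries can be dropped.
        self._blocked = {j: exp for j, exp in self._blocked.items() if exp > now}


def may_mint(risk_score: int, threshold: int = 80) -> bool:
    """Stop minting when a risk feed reports a score at or above the threshold."""
    return risk_score < threshold
```

Storing each entry with the token's own expiry keeps the list small, since a blocked token only needs to be remembered for as long as it would otherwise have been valid.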

Eliminating the Barrier

Because of existing investment in legacy or outdated identity providers, there can be a significant barrier to moving to a modern authorization server that provides the features above. To achieve Zero Trust compliant architectures, you will have to invest in modern authorization servers that can mint tokens with fine-grained contextual awareness and evaluate them continuously, at a scale that meets the throughput requirements of all integrated applications and services.

Many providers lack these features and performance capabilities, and so push organizations toward less secure architecture patterns that satisfy only part of the Zero Trust principles. It is therefore important to ask the right questions before investing, so that you do not end up with a product whose rate limits force you to cut corners on application security and fall back to less secure integration patterns. Ask questions such as the following to understand a product’s capabilities in detail before you make a decision.

- Do you have mechanisms to trust identities from external identity providers?

- Do you have mechanisms to bind issued tokens to specific applications?

- Do you support signing key rotation?

- Do you support secure token exchange mechanisms?

- Do you have the capability to use data from external sources to evaluate token issuance criteria?

- Do you have the capability to finely control the contents of an issued token based on the requested resource, target, actor etc.?

- Do you have mechanisms to perform external checks before issuing authorization tokens?

- What is your token minting latency and performance?

Summary

By combining token-based architecture with Zero Trust principles, organizations can establish a more secure and resilient system that limits the impact of potential security breaches. This combination provides a flexible and scalable way to enforce access control and reduce the attack surface across your applications.

Cloudentity is a modern, hyper-scale, identity-aware authorization service provider. It offers these capabilities at scale, giving organizations a strong foundation for adhering to Zero Trust principles and enhancing existing Zero Trust investments, at a cost significantly lower than most existing providers. Our solution guides describe in detail how Cloudentity enables organizations to build a modern Zero Trust compliant architecture.